
Mar 06 2026

It is a yearly habit: the Hasselt diamond conference with the cryptic name SBDD. It stands for “Surface and Bulk Defects in Diamond”, though few remember this, as the acronym has been in common use for quite a while. This year, we celebrated the thirtieth edition, or XXX in Roman numerals. It was a celebratory edition filled with special events, such as the XXX session (of course that was a fun group quiz about the conference, what else did you think?), a caricaturist, a claw machine with SBDD goodies, a photobooth and a scientific poster/image competition. Indeed, the diamond community is a true scientific family when at SBDD.

SBDD XXX conference. Top left: Aylin Melan, Eleonora Thomas and Thijs van Wijk with the group poster. Top right: SBDD XXX poster prize winners. Bottom left: Caricature of Danny Vanpoucke on a beer coaster. Bottom right: Aylin with her prize winning poster.

QuATOMs was present with no less than 3 posters, and this year there was also good company from other theoretical contributors. We presented posters focussing on group-IV defects in diamond as well as our ambitions for the future. There was a huge number of posters (>170), with a lot of interest in the modeling of defects. Any conference with poster sessions also has a poster prize competition. This year, I’m happy to share that Aylin Melan won a Brillian poster prize at SBDD for her theoretical poster on GeV color centers, thanks to her skill at explaining the topic clearly to a broad (experimental) audience, as well as having a very nice poster. Congratulations Aylin!

Permanent link to this article: https://dannyvanpoucke.be/sbdd-xxx/

Feb 21 2026

During the second semester of the academic year 2025-26, the QuATOMs group has the pleasure of welcoming no less than 4 Bachelor intern students from Chemistry and 2 junior master interns from biomedical sciences, in addition to our master materiomics student Bram Makowski, and Pedro Perrout, who joined us from Brazil during the first semester. Over the coming few months, these students will be introduced to the marvellous world of computational research and carry out their first large, true research project.

Welcome to all students of this year

Permanent link to this article: https://dannyvanpoucke.be/interships-of-2025-2026/

Dec 16 2025

| Authors: | Pieter Verding, Danny E.P. Vanpoucke, Yunus T. Aksoy, Tobias Corthouts, Maria R. Vetrano, and Wim Deferme |

| Journal: | Adv. Mater. Technol. XX, YY (2025) |

| doi: | 10.1002/admt.202502104 |

| IF(2025): | 6.2 |

| export: | bibtex |

| pdf: | <AdvMaterTechnol_XX> |


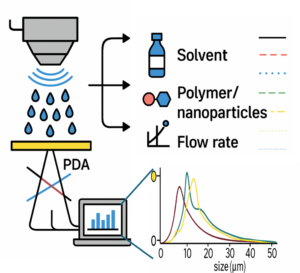

| Graphical Abstract: This study explores how machine learning models, trained on small experimental datasets obtained via Phase Doppler Anemometry (PDA), can accurately predict droplet size (D₃₂) in ultrasonic spray coating (USSC). By capturing the influence of ink complexity (solvent, polymer, nanoparticles), power, and flow rate, the model enables precise droplet control, paving the way for optimized coatings in advanced functional materials. |

This study examines droplet formation in ultrasonic spray coating (USSC) as a function of ink formulation (solvent, polymer, nanoparticles). First, acetone with polyvinylidene fluoride (PVDF) at concentrations from 0-4.5 wt% is used to examine the effect of polymer additions. Additionally, acetone-based SiO2 nanofluids (0-10 g/L) are explored. Finally, the combination of both polymer (PVDF) and nanoparticles (SiO2) in acetone is studied. Droplet sizes are measured using Phase Doppler Anemometry under varying atomization power and flow rates. Machine Learning (ML) algorithms are employed to develop droplet size models from key spray parameters, including atomization power, flow rate, polymer concentration, and nanoparticle concentration. The model shows significantly higher accuracy than existing empirical models. The model is further validated on IPA-based inks with polyethylenimine (PEIE) or ZnO nanoparticles, and on acetone–cellulose acetate formulations, confirming its robustness across diverse ink systems. In addition to revealing the influence of coating parameters on the droplet formation and distribution, obtained both via experimental validation and ML, this study demonstrates that ML can be effectively applied to small experimental datasets, offering a robust framework for optimizing droplet formation and understanding key spray parameters in USSC for complex, unexplored inks, enabling novel coating applications.
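To give a feeling for what fitting a model to a small dataset can look like in practice, here is a minimal sketch. It is not the model from the paper: the abstract does not name the algorithm or the validation scheme, so the plain linear least-squares fit and the leave-one-out cross-validation used here are assumptions chosen for illustration. The data are synthetic, and the variable names (`power`, `flow`, `polymer`, `particles`, `d32`) are hypothetical labels borrowed from the spray parameters listed above.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic stand-in data (NOT measurements from the paper): 24 "experiments"
# with four inputs named after the spray parameters in the abstract.
n_samples = 24
power = rng.uniform(1.0, 5.0, n_samples)       # atomization power (arbitrary units)
flow = rng.uniform(0.5, 3.0, n_samples)        # flow rate (arbitrary units)
polymer = rng.uniform(0.0, 4.5, n_samples)     # polymer concentration (wt%)
particles = rng.uniform(0.0, 10.0, n_samples)  # nanoparticle concentration (g/L)

# Hypothetical linear response plus noise, only so there is something to fit.
d32 = 20.0 - 1.5 * power + 2.0 * flow + 0.8 * polymer + 0.1 * particles
d32 = d32 + rng.normal(0.0, 0.5, n_samples)

# Design matrix with an intercept column.
X = np.column_stack([np.ones(n_samples), power, flow, polymer, particles])


def loo_mae(X, y):
    """Leave-one-out cross-validated mean absolute error of a linear fit."""
    errors = []
    for i in range(len(y)):
        train = np.arange(len(y)) != i          # hold out sample i
        coef, *_ = np.linalg.lstsq(X[train], y[train], rcond=None)
        errors.append(abs(X[i] @ coef - y[i]))  # error on the held-out sample
    return float(np.mean(errors))


mae = loo_mae(X, d32)
baseline = float(np.mean(np.abs(d32 - d32.mean())))  # "always predict the mean"
print(f"LOO-CV MAE: {mae:.2f}, mean-predictor MAE: {baseline:.2f}")
```

With so few samples, holding out one point at a time uses almost all data for training while still giving an error estimate on unseen points, which is why it is a common choice for small datasets.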

Permanent link to this article: https://dannyvanpoucke.be/2025-paper_mldroplets_pieterverding-en/

Dec 13 2025

| Authors: | Rani Mary Joy, Miquel Cherta Garrido, Omar J.Y. Harb, Hendrik Jeuris, Rozita Rouzbahani, Jan D’Haen, Stephane Clemmen, Dries Van Thourhout, Danny E.P. Vanpoucke, Paulius Pobedinskas, and Ken Haenen |

| Journal: | ACS Materials Lett. 8(1), 137-144 (2026) |

| doi: | 10.1021/acsmaterialslett.5c01218 |

| IF(2023): | 8.7 |

| export: | bibtex |

| pdf: | <ACSMaterialsLett_8> |


| Graphical Abstract: Experimental observation of SnV zero-phonon-lines in diamond. |

Group IV color centers in diamond are promising single-photon emitters for quantum information processing and networking. Among them, the tin-vacancy (SnV) center stands out due to its long spin coherence times at cryogenic temperatures above 1 K. While SnV centers have been realized using various fabrication routes, their in situ formation via microwave plasma-enhanced chemical vapor deposition (MW PE CVD) remains relatively unexplored. In this study, SnV centers, identified by a zero-phonon line (ZPL) near 620 nm, were synthesized in nanocrystalline diamond and free-standing microcrystalline diamond using tin oxide (SnO2) as a dopant source at substrate temperatures of 750°C and 850°C. Photoluminescence measurements reveal that lowering the substrate temperature enhances both the ZPL intensity and spatial uniformity of SnV centers. These results highlight substrate temperature as a key parameter for controlling SnV incorporation during MW PE CVD growth and provide insights into optimizing fabrication strategies for diamond-based quantum technologies.

Permanent link to this article: https://dannyvanpoucke.be/2025-paper_snvfabrication_ranimjoy-en/

Sep 24 2025

| Authors: | Goedele Roos, Danny E.P. Vanpoucke, Ralf Blossey, Marc F. Lensink, and Jane S. Murray |

| Journal: | J. Chem. Phys. 163, 114112 (2025) |

| doi: | 10.1063/5.0268712 |

| IF(2023): | 3.1 |

| export: | bibtex |

| pdf: | <JChemPhys_163> |


| Graphical Abstract: The Electrostatic Potential of water in different situations. On the left two interacting water molecules are shown, while on the right a water molecule interacting with a protein model representation is shown. |

The electrostatic potential plotted on varying contours (VS) of the electron density guides us in the understanding of how water interactions exactly take place. Water (H2O) is extremely well balanced, having a hydrogen VS,max and an oxygen VS,min of similar magnitude. As such, it has the capacity to donate and accept hydrogen bonds equally well. This has implications for the interactions that water molecules form, which are reviewed here, first in water–small molecule models and then in complex sites such as lactose and its crystals and in protein–protein interfaces. Favorable and unfavorable interactions are evaluated from the electrostatic potential plotted on varying contours of the electronic density, allowing these interactions to be readily visualized. As such, with one calculation, all interactions can be analyzed by gradually looking deeper into the electron density envelope and finding the nearly touching contour. Its relation with interaction strength has the electrostatic potential to be used in scoring functions. When properly implemented, we expect this approach to be valuable in modeling and structure validation, avoiding tedious interaction strength calculations. Here, applied to water interactions in a variety of systems, we conclude that all water interactions take the same general form, with water behaving as a “neutral” agent, allowing its interaction partner to determine if it donates or accepts a hydrogen bond, or both, as determined by the highest possible interaction strength(s).

Permanent link to this article: https://dannyvanpoucke.be/2025-paper-wateresp-roos-en/

Permanent link to this article: https://dannyvanpoucke.be/special-issue-revolutions-in-the-integration-of-artificial-intelligence-and-machine-learning-in-carbon-based-materials-research/

Jul 20 2025

With the planned arrival of 2 more PhD students to the group, it is finally time to work on a group logo. QuATOMs is no longer a small, solitary one-man show, but has grown in the last three years to 5 eager PhD students, and 3 post-docs have already been part of the team.

The green-on-black color scheme is inspired by old-school terminals, while the mage penguin of course combines a reference to Tux with the sometimes magical nature of computational materials research.

Permanent link to this article: https://dannyvanpoucke.be/quatoms-group-logo/

Jul 08 2025

For the second year in a row, we are organising a summer school with the master materiomics program, aimed at students in their second or third bachelor year of chemistry or physics. Within this summer school, the students are introduced to the various topics which play an important role in materials research. Today, as part of the quantum pillar, I had the pleasure of introducing the students to the world of computational research, with a focus on its application to quantum mechanical modelling. We learned, for example, that it is practically impossible to store the wavefunction of a simple small molecule like benzene: it would require a great deal more than a mole of galaxies in mass to store it. With Density Functional Theory, on the other hand, you can easily investigate it on a modern-day laptop, as you only need the electron density.

Materiomics summer school of 2025.
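The storage argument for benzene can be made concrete with a back-of-the-envelope count. The sketch below is only an illustration under stated assumptions: a deliberately coarse grid of 10 points per coordinate (the name `points_per_axis` and its value are my choice, not something from the lecture), spin ignored, and one complex double per wavefunction value. It compares the number of grid values needed for the many-electron wavefunction, which depends on three coordinates per electron, with the number needed for the electron density, which depends on three coordinates in total.

```python
# Back-of-the-envelope storage estimate; the grid resolution is an assumption.
n_electrons = 6 * 6 + 6 * 1   # benzene, C6H6: six carbons (6 e-) + six hydrogens (1 e-)
points_per_axis = 10          # deliberately coarse, assumed grid
bytes_per_complex = 16        # one complex double-precision number
bytes_per_real = 8            # one real double-precision number

# The many-electron wavefunction depends on 3 coordinates per electron (spin ignored).
wavefunction_values = points_per_axis ** (3 * n_electrons)
wavefunction_bytes = wavefunction_values * bytes_per_complex

# The electron density depends on only 3 coordinates in total.
density_values = points_per_axis ** 3
density_bytes = density_values * bytes_per_real

print(f"electrons in benzene: {n_electrons}")
print(f"wavefunction: {float(wavefunction_values):.0e} values, "
      f"{float(wavefunction_bytes):.1e} bytes")
print(f"density: {density_values} values, {density_bytes} bytes")
```

Even on this very coarse grid, the wavefunction needs a number of values with more than a hundred digits, while the density fits in a few kilobytes. A finer grid only widens the gap.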

Permanent link to this article: https://dannyvanpoucke.be/summerschool-materiomics/

Jun 27 2025

LaTeX is one of the nicest tools for formatting documents, but also a rabbit hole when you want to get that one small feature to your liking. Many PhD students discover how months quickly vanish when trying to create the perfect template…and the situation does not improve with age :-). In a recent bout of rabbit chasing, I decided that I wanted syntax highlighting for Python in a course syllabus I’m planning. Early searches looked hopeful, as a simple-to-use package seems to be available for the job (and is even suitable for other programming languages): minted, which is promoted by the Overleaf tutorial page on syntax highlighting. Installation is simple using the MiKTeX Console. (Though the latter first destroyed itself during an update, losing access to the Qt framework. But once MiKTeX was reinstalled, installing minted was trivial.) Unfortunately, WinEdt then reported an issue which was rather vaguely defined: minted could be not installed, incorrectly installed, missing from the path, not accessible without shell-escape, missing some environment variable…

After some searching (shell-escape switched on, added to the path, installing additional Python support packages; seriously?) it became clear that the package was not installed entirely correctly. A .py script isn’t sufficient on Windows, and an additional cmd script was needed (as well as a Python installation, which was a bit surprising as we are talking about LaTeX, so there should be no need for Python, but apparently minted is a Python library wrapped up for LaTeX). So, long story short, if you want to work with minted, you should add a small cmd script to the binary folder of your LaTeX install (after “installing minted” using MiKTeX) which calls your Python installation to execute the Python script latexminted.py.

    @echo off
    "C:\Path\To\Python\Install\python.exe" "C:\Path\To\MikTex\Minted\latexminted.py" %*

Permanent link to this article: https://dannyvanpoucke.be/highlighting-python-in-latex/

Jun 23 2025

MSc thesis presentations of Brent Motmans and Eleonora Thomas (master materiomics students 2025). Both presented applications of ML in materials research: machine learning of particle sizes using small lab-scale datasets (Brent) and the development of Machine Learned Interatomic Potentials for the modelling of (the dynamics of) H-based defects in diamond (Eleonora).

Today we had the MSc presentations of the master Materiomics: the culmination of two years of hard study and intense research activities, resulting in a final master thesis paper. This year the QuATOMs group hosted two MSc students: Brent Motmans and Eleonora Thomas. Brent Motmans performed his research in a collaboration between the QuATOMs and DESINe groups, and investigated the application of small-data machine learning to the prediction of the particle size of Cu nanoparticles. His study shows that even with a dataset of fewer than 20 samples, a reasonable 6-feature model can be created. As in previous research, he found that standard hyperparameter tuning fails, but human intervention can resolve this issue. Eleonora Thomas, on the other hand, introduced Machine Learned Interatomic Potentials (MLIPs) into the group. By investigating different MLIPs available in the literature, she pinpointed strengths and weaknesses of the different models, as well as the technical needs for pursuing such research further in our group. As a side result, she was able to generate a model for H diffusion in diamond with an MAE for the total energy of <10 meV/atom, competing with models like Google DeepMind’s GNoME.

While working on their MSc theses, Brent and Eleonora also applied for fellowship funding for a PhD position, and we are happy to announce that both Brent and Eleonora won their grants and will be starting in the QuATOMs group as new PhD students in the coming academic year. Eleonora Thomas will be working on the modelling of lignin solvation, while Brent will work in a collaboration with the HyMAD group on the modelling of hybrid perovskites.

Permanent link to this article: https://dannyvanpoucke.be/msc-materiomics-defences-new-quatoms-members/