Jul 14 2016

The first PC we got at our home was a Pentium II. My dad got it, because I was going to university, and I would be able to do something “useful” with it. (Yup, I survived my entire high school career searching stuff in the library and the home encyclopedia. Even more, Google didn’t even exist before we got our computer, as the company was only founded in 1998 🙂 ). The machine was advertised as state of the art with a clock rate of a whopping 233 MHz! During the decade that followed, clock rates kept increasing at a steady pace, until they saturated at about 3-4 GHz (roughly 15 times faster than the 233 MHz) around 2005. Since then, the clock rate has not increased a bit. If anything, the average clock rate has even decreased to the range 2-3 GHz. The reason is power consumption: as it grows quadratically with the clock rate, (1) a higher clock rate produces much more heat, which needs to be transported away from your CPU (otherwise it gets destroyed), and (2) reducing the clock rate by a factor of 2 reduces the power per CPU by a factor of 4, which allows you to power 4 CPUs at half the clock rate for the same budget, effectively doubling your calculation power. (There are even more tricks involved in modern CPUs which crank up performance such that the clock rate isn’t a real measure for performance any longer, and sales people need to learn more new buzzwords to sell your computer/laptop 👿 )

Where in 2005 you bought a single CPU with a high clock rate, you now get a machine with multiple cores. Most machines you can get these days have a minimum of 2 cores, with quad-core machines becoming more and more common. But, there is always a but, even though you now have access to multiple times the processing power of 2005, this does not mean that your own code will be able to use it. Unfortunately there is no simple compiler switch which makes your code parallel (like the -m64 switch which makes your code 64-bit); you have to do this yourself (the free lunch is over). Two commonly used frameworks for this task are OpenMP and MPI. The former mainly focuses on shared memory configurations (laptops, desktops, single nodes in a cluster), while the latter focuses on large distributed memory setups (multi-node clusters) and is thus well-suited for creating codes that need to run on hundreds or even thousands of CPUs. The two frameworks differ significantly in their complexity. Fortunately for us, OpenMP is both the easier one, and the one most suited for a modern multi-core computer. The OpenMP framework consists of pragmas (or directives) which can be added to an existing code as comment lines, and which tell a compiler knowledgeable of OpenMP how to parallelize the code. (It is interesting to note that MPI and OpenMP are intended for parallel programming in either C, C++ or Fortran … a hint as to what the important programming languages are.)

As most modern Fortran compilers are also well aware of OpenMP (you can check which version of OpenMP is supported here), you generally will not need to install a new compiler to write parallel Fortran code. You only need to add a single compiler flag: -fopenmp (gcc/gfortran), -openmp (Intel compiler; -qopenmp in more recent versions), or -mp (Portland Group). In Code::Blocks you will find this option under Settings > Compiler > Compiler Settings tab > Compiler Flags tab (if the option isn’t present, try adding it to “other compiler options” and hope your compiler recognizes one of the flags).

Secondly, you need to link in the OpenMP library. In Code::Blocks go to Settings > Compiler > Linker Settings tab > Link Libraries: add. There you add the libgomp.dll.a library (generally found in the folder of your compiler; in case of a 64-bit compiler, make sure you get the 64-bit version).

Finally, you may want to get access to OpenMP functions inside your code. This can be achieved by a use statement: use omp_lib.

OpenMP contains several functions which allow you to query and set several environment variables (check out these cheat-sheets for OpenMP v3.0 and v4.0).

Notice in the example code above that outside the parallel section, delimited by the directives !$OMP PARALLEL and !$OMP END PARALLEL, the program only sees a single thread, while inside the parallel section 8 threads will run (independent of the number of cores available).
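The behaviour described here can be reproduced with a few lines. The following is a minimal sketch, not the original listing: the program name is made up, and the thread count of 8 is simply taken from the text. It assumes a compiler with OpenMP support (e.g. gfortran with the -fopenmp flag).

```fortran
program thread_count_sketch
   use omp_lib            ! gives access to the OpenMP runtime functions
   implicit none

   ! Request 8 threads for the parallel regions that follow.
   call omp_set_num_threads(8)

   ! Outside a parallel region only the initial thread is active.
   write(*,'(A,I0)') 'Threads outside the parallel region: ', omp_get_num_threads()

   !$OMP PARALLEL
   ! Every thread executes this block; only thread 0 reports, to keep the output short.
   if (omp_get_thread_num() == 0) then
      write(*,'(A,I0)') 'Threads inside the parallel region:  ', omp_get_num_threads()
   end if
   !$OMP END PARALLEL
end program thread_count_sketch
```

The runtime is allowed to hand out fewer threads than requested, so the second number is usually, but not necessarily, 8.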

The OpenMP framework consists of a set of directives which can be used to manage the parallelization of your code (cheat-sheets for OpenMP v3.0 and v4.0). I will not describe them in detail, as there exist several very well written and complete tutorials on the subject; we’ll just have a look at a quick and easy parallelization of a big do-loop. As said, OpenMP makes use of directives (or pragmas) which are placed as comments inside the code. As such they will not interfere with your code when it is compiled as a serial code (i.e. without the -fopenmp compiler flag). The directives are preceded by what is called a sentinel ( !$OMP ). In the above example code, we already saw a first directive: PARALLEL. Only inside blocks delimited by this directive can your code run in parallel.

The program above fills up a large 3D array with random values taken from a predetermined list. The user is asked to set the number of threads (lines 14-16). The function omp_get_wtime() returns the elapsed wall-clock time in seconds, measured from an arbitrary but fixed point in the past, while the function omp_get_wtick() gives the number of seconds between successive clock ticks. These functions can be used to get some timing data for each thread, but also for the entire program. For each thread, the starting time is stored in the variable stt. To protect this variable from being overwritten by the other threads, it is declared as private to the thread (line 18: PRIVATE(stt) ). As a result, each thread will have its own private copy of the stt variable.

The DO directive on line 21, tells the compiler that the following loop needs to be parallelized. Putting the !$OMP DO pragma around the outer do-loop has the advantage that it minimizes the overhead produced by the parallelization (i.e. resources required to make local copies of variables, calculating the distribution of the workload over the different threads at the start of the loop, and combining the results at the end of the loop).

As you can see, parallelizing a loop is rather simple. It takes only 4 additional comment lines (!$OMP PARALLEL , !$OMP DO, !$OMP END DO and !$OMP END PARALLEL) and some time figuring out which variables should be private for each thread, i.e. which are the variables that get updated during each cycle of a loop. Loop counters you can even ignore as these are by default considered private. In addition, the number of threads is set on another line giving us 5 new lines of code in total. It is of course possible to go much further, but this is the basis of what you generally need.
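To make the recipe concrete, here is a minimal sketch in the same spirit as the program described above. It is not the original listing, so the line numbers mentioned in the text do not apply to it. The array size, the value list `vals` and the fixed thread count are assumptions chosen for illustration; only `stt` is a name taken from the text.

```fortran
program fill_array_sketch
   use omp_lib
   implicit none
   integer, parameter :: n = 200                          ! array dimension, kept small on purpose
   real, parameter    :: vals(4) = [1.0, 2.0, 3.0, 5.0]   ! hypothetical list of allowed values
   real, allocatable  :: arr(:,:,:)
   real               :: r
   integer            :: i, j, k, idx
   double precision   :: stt, t0

   allocate(arr(n,n,n))
   call omp_set_num_threads(4)                 ! the extra line that sets the number of threads
   t0 = omp_get_wtime()

   !$OMP PARALLEL PRIVATE(stt, r, idx)
   stt = omp_get_wtime()                       ! per-thread start time, private to each thread
   !$OMP DO
   do k = 1, n                                 ! the outer loop is distributed over the threads
      do j = 1, n
         do i = 1, n
            call random_number(r)              ! r lies in [0,1)
            idx = 1 + int(r*size(vals))        ! pick an index into the value list
            arr(i,j,k) = vals(min(idx, size(vals)))
         end do
      end do
   end do
   !$OMP END DO
   write(*,'(A,I0,A,F8.4,A)') 'thread ', omp_get_thread_num(), &
      ' ran for ', omp_get_wtime() - stt, ' s'
   !$OMP END PARALLEL

   write(*,'(A,F8.4,A)') 'total wall time: ', omp_get_wtime() - t0, ' s'
end program fill_array_sketch
```

The loop counters i, j and k are left out of the PRIVATE clause on purpose, since they are private by default. The variables r and idx are updated in every cycle and therefore have to be listed.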

Unfortunately, the presented example is not that computationally demanding, so it will be hard to see the full effect of the parallelization. Simply increasing the array size will not resolve this as you will quickly run out of memory. Only with more complex operations in the loop will you clearly see the parallelization. An example of a more complex piece of code is given below (it is part of the phonon-subroutine in HIVE):

In the above code, a set of equations is solved using the LAPACK eigenvalue solver ZHEEV to obtain the energies of the phonon-modes in each point of the Brillouin zone. As the calculation of the eigenvalue spectrum for each point is independent of all other points, this is extremely well-suited for parallelization, so we can add !$OMP PARALLEL and !$OMP END PARALLEL on lines 7 and 47. Inside this parallel section there are several variables which are recycled for every grid point, so we will make them PRIVATE (cf. line 7, most of them are work-arrays for the ZHEEV subroutine).

Lines 12 and 44 both contain a write-statement. Without further action, each thread will perform this write action, and we’ll end up with multiple copies of the same line (although this will not break your code, it will look very sloppy to any user of the code). To circumvent this problem we make use of the !$OMP SINGLE directive. This directive makes sure only 1 thread (the first to arrive) will perform the write action. Unfortunately, the end of a SINGLE block comes with an implicit barrier at which all other threads will wait. To prevent this from happening, the NOWAIT clause is added at the end of the block. In this specific case, the NOWAIT clause will have only very limited impact due to the location of the write-statements. But this need not always be the case.
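The SINGLE/NOWAIT combination can be tried out in isolation. The sketch below is a stand-alone illustration and not an excerpt from HIVE; the array `work` and the loop body are placeholders. Note that in Fortran the NOWAIT clause goes on the closing !$OMP END SINGLE line.

```fortran
program single_sketch
   use omp_lib
   implicit none
   integer :: i
   real    :: work(1000)

   !$OMP PARALLEL
   !$OMP SINGLE
   ! Executed by exactly one thread (whichever arrives first).
   write(*,'(A,I0,A)') 'Starting loop on ', omp_get_num_threads(), ' threads'
   !$OMP END SINGLE NOWAIT
   ! NOWAIT removes the implicit barrier, so the other threads start the loop right away.
   !$OMP DO
   do i = 1, size(work)
      work(i) = sqrt(real(i))
   end do
   !$OMP END DO
   !$OMP END PARALLEL

   write(*,'(A,F10.4)') 'Last element: ', work(size(work))
end program single_sketch
```

Without the SINGLE block the message would be printed once per thread.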

On line 15 the !$OMP DO pragma indicates that a loop will follow which should be parallelized. Again we choose the outer loop, so as to reduce the overhead due to the parallelization procedure. We also tell the compiler how the work should be distributed using the SCHEDULE(TYPE,CHUNK) clause. There are three types of scheduling:
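The three types meant here are most likely STATIC, DYNAMIC and GUIDED, the schedule kinds that accept a chunk size. This is an assumption, as the OpenMP standard also defines AUTO and RUNTIME. A small sketch of the clause in action, with a made-up array `owner` that records which thread handled which iteration:

```fortran
program schedule_sketch
   use omp_lib
   implicit none
   integer, parameter :: n = 16
   integer :: i, owner(n)

   ! Iterations are handed out in chunks of 2 to whichever thread is free.
   !$OMP PARALLEL DO SCHEDULE(DYNAMIC, 2)
   do i = 1, n
      owner(i) = omp_get_thread_num()   ! remember which thread handled iteration i
   end do
   !$OMP END PARALLEL DO

   write(*,'(A,16(1X,I0))') 'thread per iteration:', owner
end program schedule_sketch
```

Replacing DYNAMIC by STATIC or GUIDED and rerunning shows how the distribution of the iterations over the threads changes.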

From this real-life example, it is again clear that OpenMP parallelization in Fortran can be very simple.

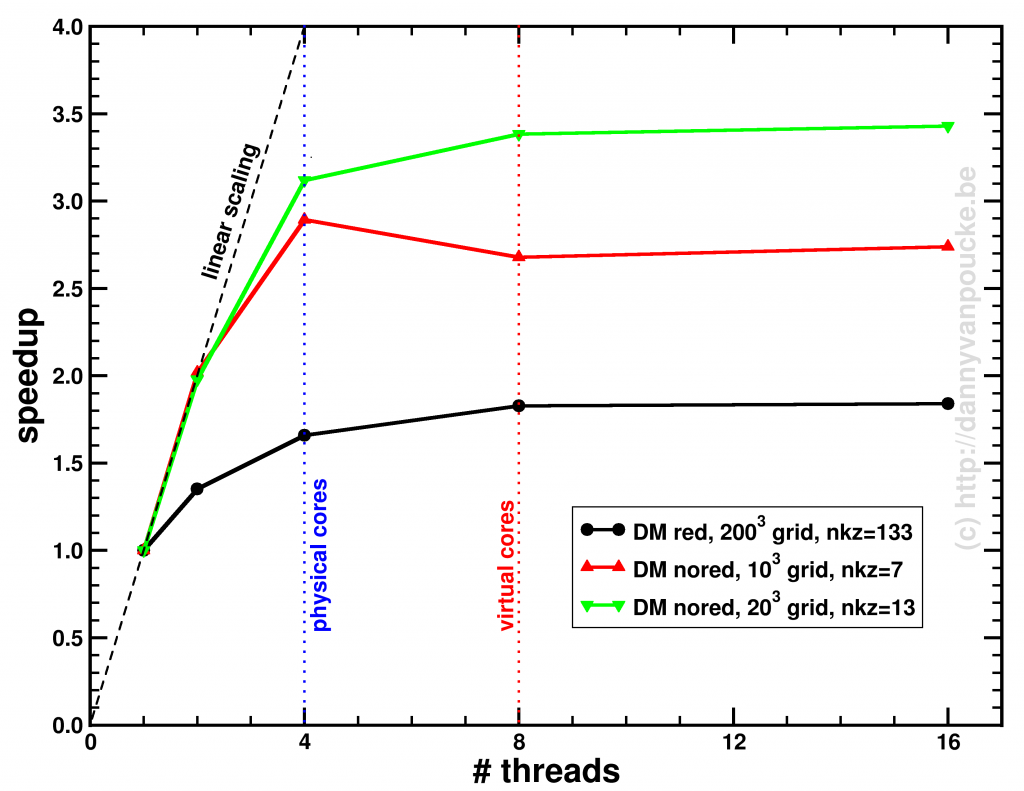

On my loyal sidekick (with hyper-threaded quad-core Core i7) I was able to get the following speedups for the phonon-code (the run was limited to performing only a phonon-DOS calculation):

Speedup of the entire phonon-subroutine due to parallelization of the main-phonon-DOS loop.

The above graph shows the speed-up results for the two different modes for calculating the phonon-DOS. The reduced mode (DM red), uses a spectrum reduced to that of a unit-cell, but needs a much denser sampling of the Brillouin zone (second approach), and is shown by the black line. The serial calculation in this specific case only took 96 seconds, and the maximum speedup obtained was about x1.84. The red and green curves give the speedup of the calculation mode which makes use of the super-cell spectrum (DM nored, i.e. much larger matrix to solve), and shows for increasing grid sizes a maximum speedup of x2.74 (serial time: 45 seconds) and x3.43 (serial time 395 seconds) respectively. The reason none of the setups reaches a speedup of 4 (or more) is twofold:

In case of the DM nored calculations, the parallelized loop clearly takes the biggest part of the calculation-time, while for the DM red calculation, also the section generating the q-point grid takes a large fraction of the calculation time limiting the effect of parallelization. An improvement here would be to also parallelize the subroutine generating the grid, but that will be for future work. For now, the expensive DM nored calculations show an acceptable speedup.
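The effect of a serial section on the attainable speedup can be estimated with Amdahl's law, S = 1 / ((1-p) + p/N), where p is the fraction of the run time that is parallelized and N the number of cores. The sketch below inverts this relation for the three speedups quoted above. It rests on two assumptions that are not part of the measurements: that Amdahl's law applies, and that N = 4 (the physical cores, ignoring hyper-threading).

```fortran
program amdahl_sketch
   implicit none
   integer, parameter  :: dp = kind(1.0d0)
   real(dp), parameter :: speedup(3) = [1.84_dp, 2.74_dp, 3.43_dp]  ! speedups quoted in the text
   integer, parameter  :: ncores = 4                                  ! assumption: physical cores only
   real(dp) :: p
   integer  :: i

   do i = 1, size(speedup)
      ! Amdahl's law: S = 1 / ((1-p) + p/N)  =>  p = (1 - 1/S) / (1 - 1/N)
      p = (1.0_dp - 1.0_dp/speedup(i)) / (1.0_dp - 1.0_dp/real(ncores, dp))
      write(*,'(A,F5.2,A,F6.3)') 'speedup ', speedup(i), ' -> parallel fraction ~ ', p
   end do
end program amdahl_sketch
```

The resulting fractions are rough estimates only, but they give an idea of how much of each run sits outside the parallelized loop.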

Jun 19 2016

Current day computers generally have 64-bit processors, and most even have 64-bit operating systems. On such systems, 32-bit programs will run fine, but 64-bit programs can make more efficient use of the underlying system. When we installed a Fortran compiler and the Code::Blocks IDE, the default Fortran compiler generated 32-bit programs. This generally is not an issue, unless you need a large amount of memory, for example to store a temporary array with 400³ double precision coordinates (as I did for a project I’m currently working on). You may first start to look for ways of increasing the stack-size of your program, but you will soon discover that the problem is more profound: a 32-bit program cannot access address space beyond 4 GB. (In practice, you will generally not even reach 4 GB before running into problems.) This is because the memory address of your data is stored as a 32-bit value (2³² = 4 294 967 296 bytes = 4 GB), so the only way out of this predicament is a “larger address”, aka 64-bit. So you need to install a new compiler capable of producing 64-bit programs.
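The arithmetic behind this is easy to check. The following sketch assumes that each of the 400³ grid points stores three double precision components (x, y and z); that layout is a guess, as the text only mentions the number of coordinates.

```fortran
program memory_sketch
   use iso_fortran_env, only: int64
   implicit none
   integer(int64), parameter :: n = 400_int64          ! grid points per direction
   integer(int64), parameter :: bytes_per_real = 8     ! size of one double precision value
   integer(int64) :: npoints, nbytes, limit32

   npoints = n**3                             ! number of grid points
   nbytes  = 3 * npoints * bytes_per_real     ! three components per point (assumed layout)
   limit32 = 2_int64**32                      ! number of bytes a 32-bit address can reach

   write(*,'(A,I0)') 'bytes needed for the array: ', nbytes
   write(*,'(A,I0)') '32-bit address space:       ', limit32
end program memory_sketch
```

64-bit integers are used for the bookkeeping itself, since 2³² does not fit in a default 32-bit integer.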

May 29 2016

Over the past few weeks I have bumped into several issues, each tracing back to numerical accuracy. Although I have been programming for almost two decades, I never had to worry much about this, making these events seem as if the universe is trying to tell me something.

Now, let me try to give a proper start to this story. Computational (materials) research is generally perceived as a subset of theoretical (materials) research, and it is true that such a case can be made. It is, however, also true that such thinking can trap us (i.e. the average computational physicist/chemist/mathematician/… programming his/her own code) with numerical accuracy problems. While theoretical equations use exact values for numbers, a computer program is limited by the numerical precision of the variables (e.g. single, double or quadruple precision for real numbers) used in the program. This means that actual numbers with a larger precision are truncated or rounded to the precision of the variable (e.g. 1/3 becomes 0.3333333 instead of 0.333… with an infinite series of 3’s). Most of the time, this is sufficient, and nothing strange will happen. Even more, most of the time, the additional digits would only increase the computational cost while not improving the results in a significant fashion.
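The 1/3 example is easily made visible. A minimal sketch, in which the kind parameters `sp` and `dp` are local names chosen for illustration:

```fortran
program third_sketch
   implicit none
   integer, parameter :: sp = kind(1.0), dp = kind(1.0d0)
   real(sp) :: third_sp
   real(dp) :: third_dp

   third_sp = 1.0_sp / 3.0_sp      ! rounded to single precision
   third_dp = 1.0_dp / 3.0_dp      ! rounded to double precision

   ! Print more digits than either type can hold to show where the rounding sets in.
   write(*,'(A,F22.20)') 'single: ', third_sp
   write(*,'(A,F22.20)') 'double: ', third_dp
   write(*,'(A,ES10.3)') 'difference: ', real(third_sp, dp) - third_dp
end program third_sketch
```

The digits printed beyond the precision of each type are no longer 3’s, which is the rounding described above.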

To understand the importance, or the lack thereof, of additional significant digits, let us first have a look at the precision of $\pi$ and the circumference and surface area of a disc. We will be looking at a rather large disc, one with a radius equal to the distance between the sun and the nearest star, Alpha Centauri, which is 39 900 000 000 000 km away. The circumference of this disc is given by $2\pi r$ (or about $2.5 \times 10^{14}$ km). As a single precision variable, $\pi$ will have about 7-8 significant digits. This means the calculated circumference will have an accuracy of the order of 1 000 000 to 10 000 000 km (or a few times to a few dozen times the distance between the earth and the moon). Using a double precision $\pi$ variable, which has a precision of 16 decimal digits, the circumference will be accurately calculated to within a few tens of meters. At quadruple precision, the $\pi$ variable would have 34 significant decimal digits, and we would even be able to calculate the surface of the disc ($\pi r^{2}$, or about $5 \times 10^{33}$ m²) to within 1 m². Even the surface of a disc the size of our milky way could be calculated with an accuracy of a few hundred square km (or ± the size of Belgium ).
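These orders of magnitude can be checked with the Fortran intrinsic spacing(), which returns the distance between a number and its nearest representable neighbour. The sketch below is limited to single and double precision, as quadruple precision is not offered by every compiler; the variable names are chosen for illustration.

```fortran
program disc_precision_sketch
   implicit none
   integer, parameter  :: sp = kind(1.0), dp = kind(1.0d0)
   real(dp), parameter :: r_km = 3.99e13_dp                ! sun to Alpha Centauri, value from the text
   real(dp), parameter :: pi   = 3.14159265358979324_dp
   real(dp) :: c

   c = 2.0_dp * pi * r_km                                  ! circumference in km

   write(*,'(A,ES12.5,A)') 'circumference:             ', c, ' km'
   ! Gap between neighbouring representable numbers around c, per precision.
   write(*,'(A,ES12.5,A)') 'spacing, single precision: ', real(spacing(real(c, sp)), dp), ' km'
   write(*,'(A,ES12.5,A)') 'spacing, double precision: ', spacing(c), ' km'
end program disc_precision_sketch
```

The spacing is a lower bound on the error: a result can never be more accurate than the gap between two neighbouring numbers of its type.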

Knowing this, our mind is quickly put at ease regarding possible issues with numerical accuracy. However, once in a while we run into one exceptional case (or three, in my case).

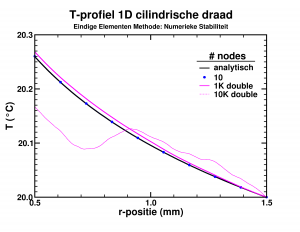

Temperature profile in the insulating layer of a cylindrical wire.

While looking into the theory behind finite elements, I had some fun implementing a simple program which calculated the temperature distribution due to heat transport in an insulating layer. The finite element approach performed rather nicely, leading to good approximate results, already for a few dozen elements. However, I wanted to push the implementation a bit (the limit of infinitely many elements should give the exact solution). Since the set of equations was solved by a LAPACK subroutine, using 10 000 elements instead of 10 barely impacted the required time (writing the results took most of the 2-3 seconds anyway). The results on the other hand were quite funny, as you can see in the picture. The initial implementation, with single precision variables, breaks down even sooner, already at 1000 elements. Apparently the elements had become too small, leading to too small variations of the properties in the stiffness-matrix, resulting in the LAPACK subroutine returning nonsense.

So it turns out that you can have too many elements in a finite element method.

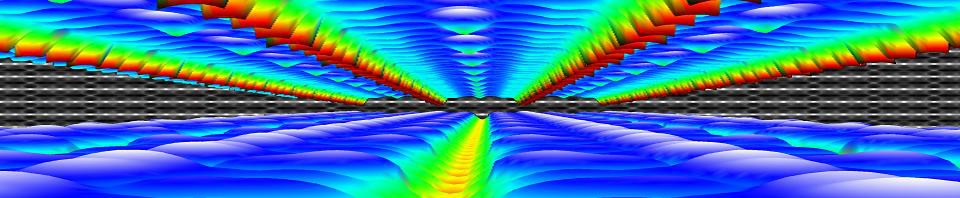

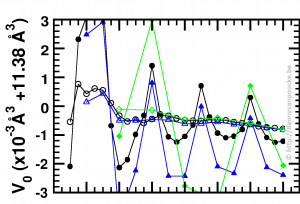

Optimized volume in Equation of State fit, as function of the range of the fitting data, and step size between data-points. green diamonds, blue triangles and black discs: 1% , 0.5% and 0.25% volume steps respectively.

Recently, I started working at the Wide Band Gap Materials group at the University of Hasselt. So in addition to MOFs I am also working on diamond based materials. While setting up a series of reference calculations, using scripts which already served me well during my work on MOFs, I was trying to figure out for which volume range and step size I would get a sufficient convergence in my Equation-of-State fitting procedure. For the MOFs this is a computationally rather expensive (and tedious) exercise, which, fortunately, gives clear results. For the 2-atom diamond unit cell the calculations are ridiculously fast (in comparison), but the results were confusing. As you can see in the picture, the values I obtained from the different fits seem to oscillate. Checking my E(V) data showed nothing out of the ordinary. All energies and volumes were clearly distinguishable, with the energies given with a precision of 0.001 meV, and the volumes with a precision of 0.01 Å³. However, as you can see in the figure, the volume-oscillations are of the order of 0.001 Å³, ten times smaller than our input precision. Calculating the volumes based on the lattice parameters to get a precision of 10⁻⁶ Å³ for the input volumes stabilizes the convergence behavior of the fits (open symbols in the figure). This problem was not present with the MOFs since these have a unit cell volume which is one hundred times larger, so a precision of 0.01 Å³ makes the relative error on the volumes one hundred times smaller than was the case for diamond.

In essence, I was trying to get more accurate output than the input I provided, which will never give sensible results (even if they actually look sensible).

The last one is a bit embarrassing as it led to a bug in the HIVE-toolbox, which has been fixed in the meantime.

One of the HIVE-toolbox users informed me that the dosgrabber routine had crashed because it could not find the Fermi-level in the output of a VASP calculation. Although VASP itself gives a value for the Fermi-level, I do not use it in the above sub-program, since this value tends to be incorrect for spin-polarized systems with different minority and majority spins. However, in an attempt to be smart (and efficient) I ended up in trouble. The basic idea behind my Fermi-level search is just running through the entire Density of States-spectrum until you have accounted for all the electrons in the system. Because the VASP estimate for the Fermi-level is not that far off, you do not need to run through the entire list of several thousand entries, but you could just take a subset centered around the estimated Fermi-level and check in that subset, speeding this up by a factor of 10 to 100. Unfortunately I calculated the energy step size between density of states entries as the difference between the first two entries, which are given with an accuracy of 0.001 eV. I guess you already have a feeling what the problem will be. When the index of the estimated Fermi-level is 1000, the error will be of the order of 1 eV, which is much larger than the range I took into account. Fortunately, the problem is easily solved by calculating the energy step size as the difference between the first and last entry, divided by the number of steps, making the error in this particular case more than a thousand times smaller.
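The accumulation of the rounding error can be mimicked with a synthetic energy grid. The sketch below is not the dosgrabber code: the number of entries, the energy range and all variable names are hypothetical. Only the three-decimal rounding and the index 1000 are taken from the description above.

```fortran
program step_size_sketch
   implicit none
   integer, parameter  :: dp = kind(1.0d0)
   integer, parameter  :: n = 3001                ! hypothetical number of DOS entries
   real(dp), parameter :: e_first = -10.0_dp      ! hypothetical energy range (eV)
   real(dp), parameter :: e_last  =  10.0_dp
   real(dp) :: energy(n), step_true, step_two, step_span
   integer  :: i, idx

   step_true = (e_last - e_first) / real(n - 1, dp)
   do i = 1, n
      ! Mimic an output file that prints the energies with three decimals.
      energy(i) = anint((e_first + (i - 1)*step_true) * 1000.0_dp) / 1000.0_dp
   end do

   step_two  = energy(2) - energy(1)                       ! difference of the first two entries
   step_span = (energy(n) - energy(1)) / real(n - 1, dp)   ! full range divided by the number of steps

   idx = 1000
   write(*,'(A,F10.6)') 'energy at index 1000 (file):      ', energy(idx)
   write(*,'(A,F10.6)') 'predicted from first two entries: ', energy(1) + (idx - 1)*step_two
   write(*,'(A,F10.6)') 'predicted from full span:         ', energy(1) + (idx - 1)*step_span
end program step_size_sketch
```

In the first estimate the rounding error of a single step is multiplied by the index, while in the second the rounding error of the end points is divided by the number of steps.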

So, when trying to be smart, you always need to make sure you really are being smart, and remember that small numbers can become very big when there are a lot of them.

Apr 06 2016

Optimus Prime, the most prime of all autobots.[source]

2, 3, 5, 7, 11, 13, 17, 19, 23, …and on toward infinity. Prime numbers are a part of mathematics which seduce most of us at some point in our lives to try and “solve them”. There is structure in them, but too little to be easily understandable, and too much to let go and consider them chaos.

Recently, my girlfriend got sucked into this madness. Luckily she is not trying to generate the next largest prime (which would need to have more than 22 million digits, although I might have jinxed it now). Instead she is looking for order in chaos, leading to pen and paper graphs, evolving into Excel worksheets being abused as ordinary bitmap pictures and finally Mathematica applications for even more heavy lifting.

These last exercises came to a somewhat grinding halt when Mathematica refused to generate a gif-animation (it just failed to do the job without providing any warning that something might have gone awry). However, because it could generate the separate frames without any issues, I was tempted to dig up an old Fortran module which allowed me to generate gif images and animations. I remembered correctly that it was able to both read and write gif-images, and so, after 10 minutes of writing a small program (9 minutes remembering how things worked) and another 10 linking in all dependencies and fixing some inconsistencies, I had a working program for generating a gif animation from separate gif images.

The initial test went smoothly. Using some simple gif images I created in paint, the resulting 4 frame animation is the one on the left (which is probably giving you a headache as you read this ;-).

The real test, with the single frame gifs Sylvia had prepared, was rather interesting: it failed miserably. Fortunately it also taught us the origin of the problem: Mathematica was generating frames of different dimensions. And although this does not give rise to problems in the workbook or generated avi, it does kill an animated gif. After fixing this, Mathematica also successfully generated the animated gif Sylvia wanted to see. My own small program was now able to perform the intended trick as well, although it showed that a different color-map was created for each frame by Mathematica, something my own implementation did not handle well (we’ll fix that somewhere in the future).

The executable (including prerequisite dll’s) can be downloaded here.

If you do not suffer from epileptic seizures (or not yet), you could check out the results in the spoiler below.

Mar 09 2016

Today was the first day of the three-day long diamond conference at the University of Hasselt. And although this sounds as if it is a mere small-scale local conference, it is actually one of the two main international conferences in the field. The Surface and Bulk Defects in Diamond (SBDD) workshop grew in twenty years from a small event with only a few dozen participants to the current event with over 200 participants. As such, it is the place to be for someone like me, who is dipping into a new field of materials.

One thing that already became quite clear today, is the fact that there are many opportunities in this field for the computational materials scientist, as the large majority of the researchers are experimentalists. Of the >120 posters presented, I have only discovered about 5 theoretical ones. Having had very nice chats with their presenters I already learned a lot of what I will have to keep in mind when studying diamond. But so far, I have not come across any issues that are impossible to resolve, which is good news :-).

Mar 08 2016

“One ring to rule them all, one ring to find them, one ring to bring them all, and in the darkness bind them” – J.R.R. Tolkien

With the start of my new chapter, I also decided to finally buy a new laptop. It’s intended to replace the machine I used at work for the past six years, my desktop at home and my current little netbook. Of these the latter has been my true sidekick for the better part of the last decade. Although nearly all my calculations have been performed on various supercomputers in Belgium and The Netherlands, my little Asus Eee PC 1000H, being one of the last vestiges of Windows XP, was the one which I used to write nearly all of my publications, a PhD in Physics and in Chemistry, my FWO project proposal on MOFs, developed most of the HIVE toolbox, wrote the better part of this blog and website, developed and tested the agent-tutorials, and much more. In short, I did (and still do) protect it with my life.

With the unfortunate and in the end terminal shutdown of Windows XP (i.e. some people discovered how to detect the OS and link this to a kill event…just because XP no longer receives updates, and therefore suddenly becomes as leaky as a sieve 🙄 ) a new OS was needed. In addition, I also wanted some more resources for development (and just running a browser…It feels like programming skills are quickly deteriorating these days if you see how memory intensive even trivial applications are), so the new OS ended up being a new computer altogether. After some searching, I found the laptop which I hope to be using for the next decade: an Acer Predator 15. It has a nice Intel Skylake CPU (dual-threaded quad-core with 6 MB L3 cache; the 8 MB version was not to be found with the 32 GB of RAM I was looking for). The contrast with my netbook could not be greater, so I’ll be checking and comparing the performance of my HIVE-code on this new machine to that on my old Asus netbook (dual-threaded Atom CPU, with 1 GB of RAM). This should give some interesting results.

Not having installed a new Windows OS since 2007, Windows 10 came as quite a shock (and the aftershocks are still coming). It seems like privacy is a thing of the past. Anything you say, watch, type or draw is by default sent to the home office, and distributed to third parties which may be interested in providing you “user specific content” (i.e. commercials 😥 ). This is in stark contrast to the days when people often installed several antivirus programs and firewalls to protect their data from hackers…now we just seem to throw that same information out for grabs. And to think there are people who believe we are on the verge of the end of capitalism. I’m afraid they either are misreading the signs, or some are reading the signs very accurately and are collecting the new gold before it has been declared gold. I feel like I’m getting old, or just old-school.

One of the advantages of having lived through those early years, when fear of internet-hackers and piracy were defaults, is that we also learned to get around all those same protections. Maybe you remember the irony of having to rip a CD to be able to simply listen to it because of all the protection-software (and hardware when you rented it at the local library)? It seems those days are back. While installing some old games, I ran into a piracy-protection software (which is no longer supported due to security leaks) which blocked both playing and even installing the game. So you end up either buying the game through Steam (as many will suggest…why should I do that if I bought the game already…twice?) or installing it on an older machine, just brute-force copying the entire installed game, copying/installing missing dll’s and finding a no-cd crack (Yes, it is claimed as illegal, but since most machines these days come without optical drives I beg to differ), or figuring out another way to play old games. In the end, you start with a feeling of victory before even playing a new round of Civilization IV…which is nice and sad at the same time (it should not have been necessary).

Another interesting experience was the transfer of my emails from my UGent address to the UHasselt one. Mail-clients like Outlook and Thunderbird seem not that well adapted to easily handle such exercises (missing folders and emails upon copy-actions, which needed to be fixed manually), especially if you have a very extensive folder-structure. The nastiest problem I encountered when setting up accounts was the fact that the new UHasselt (Gmail) account could not be linked to the Thunderbird installation (even though the intermediate Gmail account for transferring my UGent mail was not that problematic). Apparently there was also a cookies option embedded in Thunderbird itself, which should be switched on, whoops.

Two weeks after the start of my new chapter, most hurdles have been overcome. Nearly all necessary software has been installed (with or without the cooperation of the Windows 10 OS 👿 ), my e-mail has been transferred, as well as 4 TB of data on the HPC systems (for which I am infinitely grateful to both the HPC teams of the UGent and UHasselt). Now it is time to start working again. Tomorrow, the diamond conference starts at the UHasselt, giving me the opportunity to quickly get involved in a new, additional field of materials.